The NVIDIA GeForce RTX 3060 Ti posts strong performances in CUDA, OpenCL and Vulkan benchmarks - NotebookCheck.net News

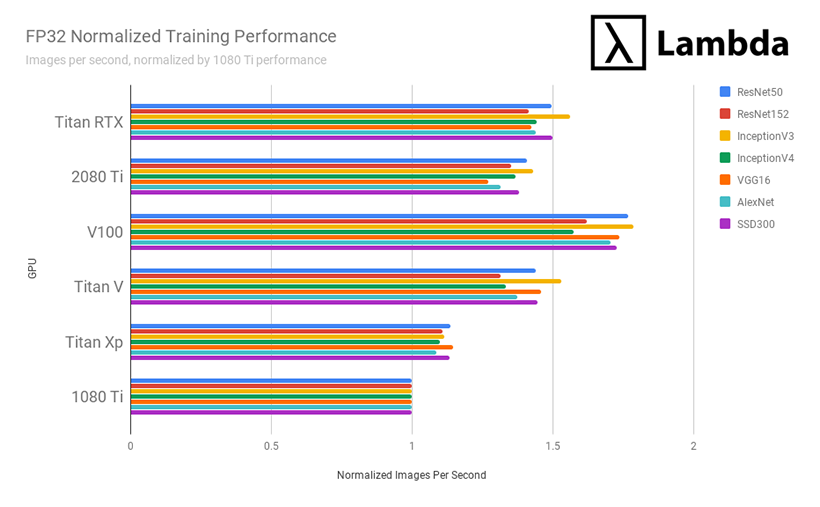

Should I get rtx 3060 or 3070 if I want to do machine learning? Is vram more important or tensor cores more important? - Quora

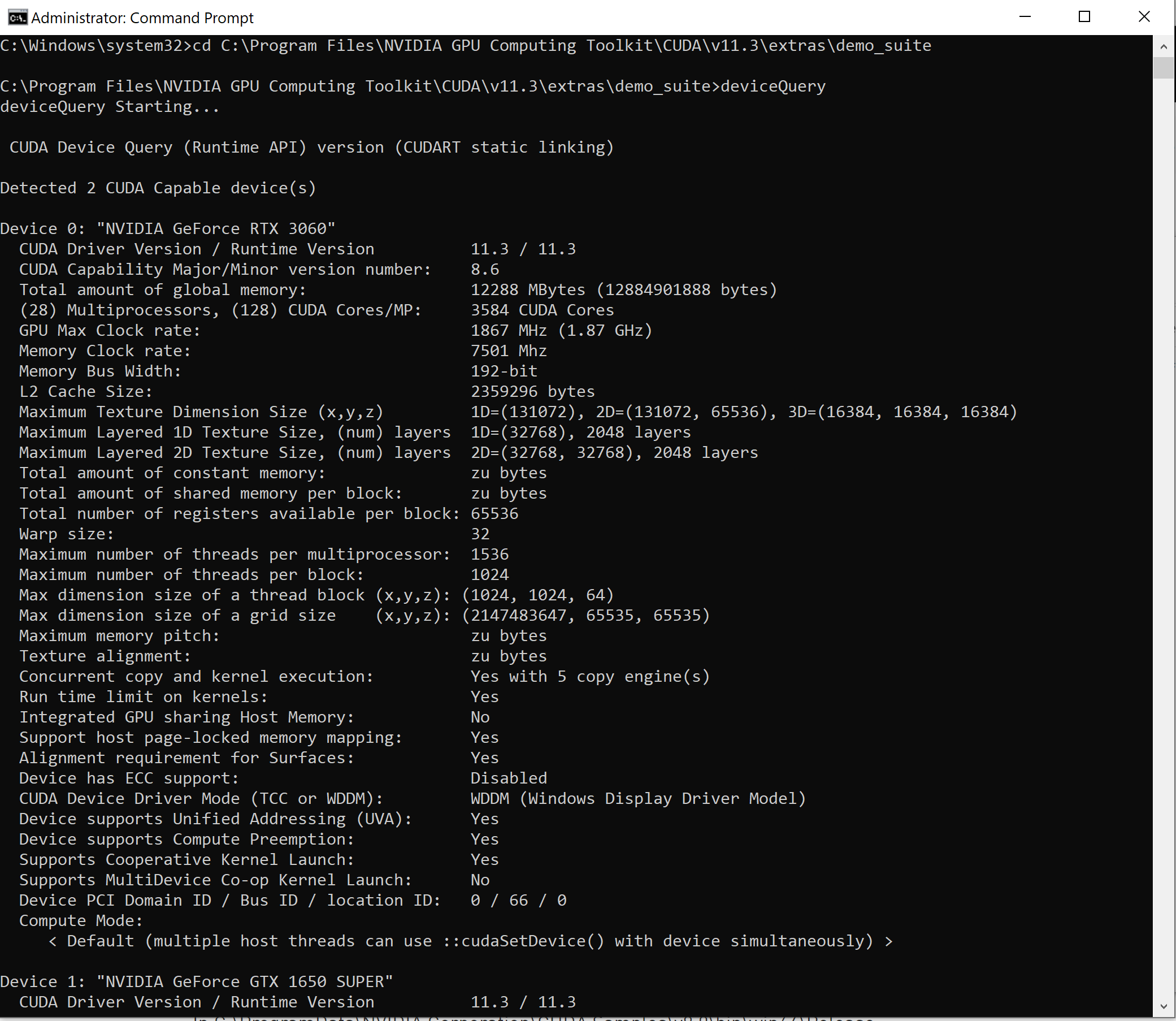

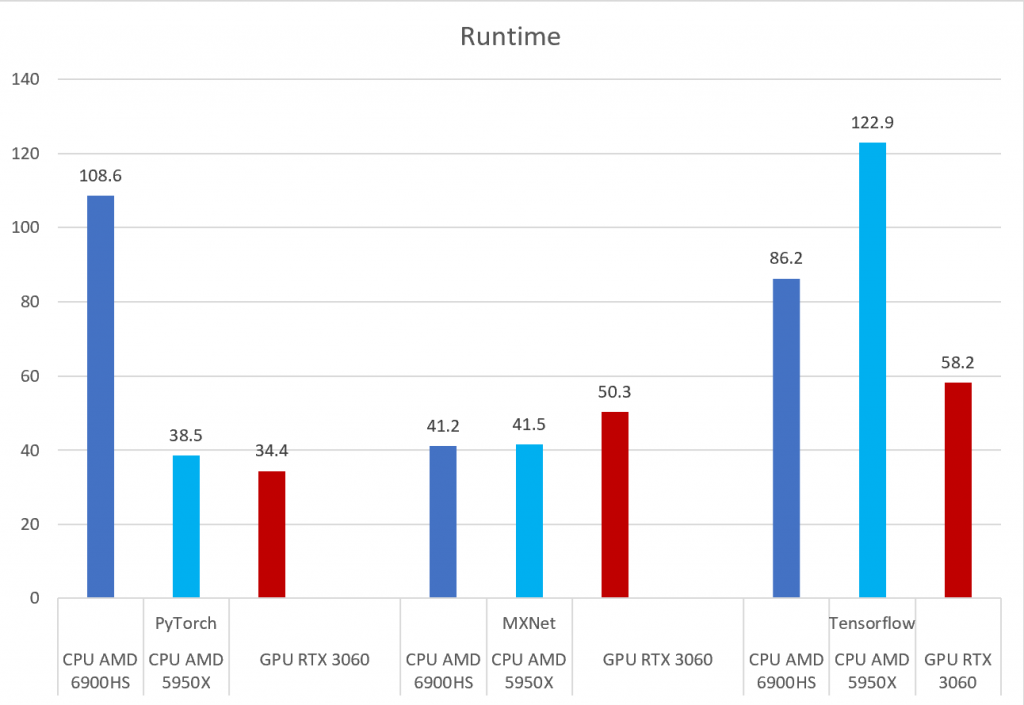

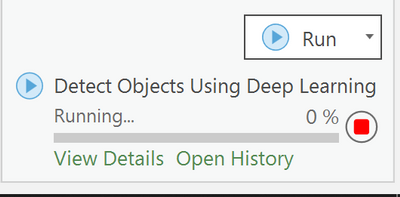

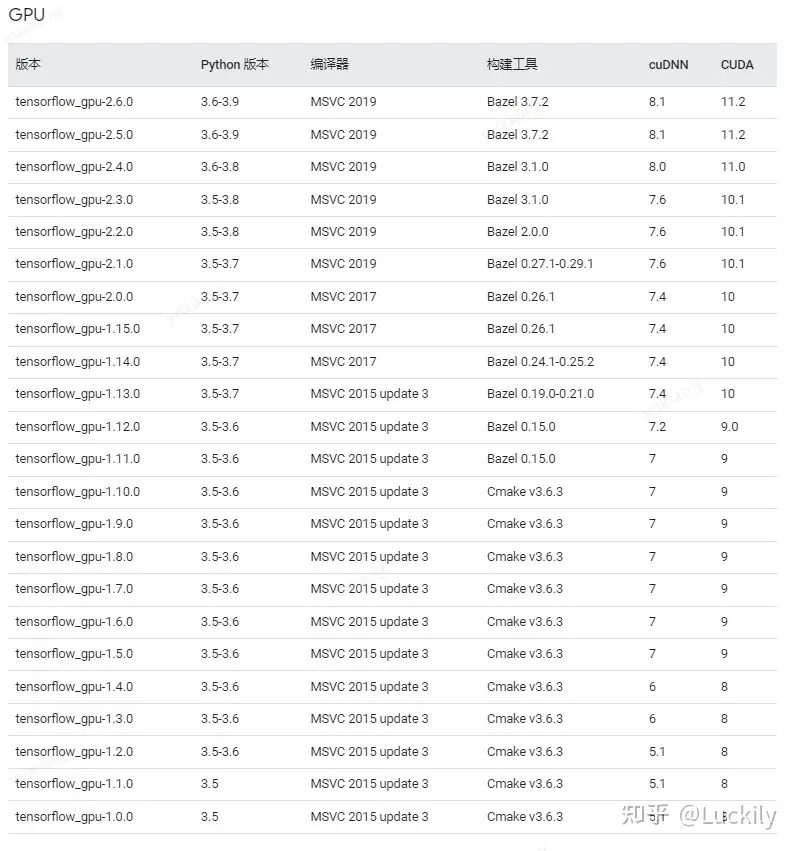

PyTorch, Tensorflow, and MXNet on GPU in the same environment and GPU vs CPU performance – Syllepsis

maderix on Twitter: "Looks like Intel ARC gpus have better acceleration on Topaz enhance than current gen RTX 3060. That seems encouraging for consumers. I wonder if it would translate to better

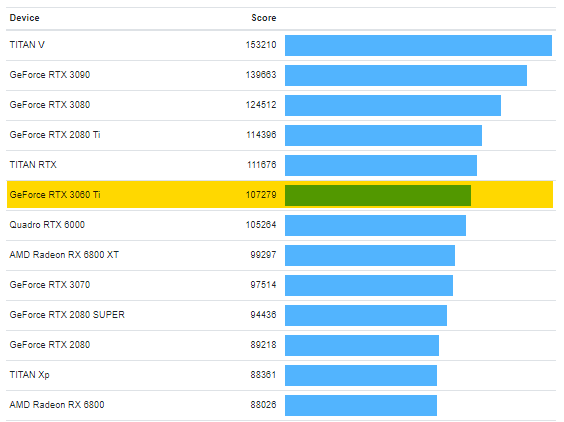

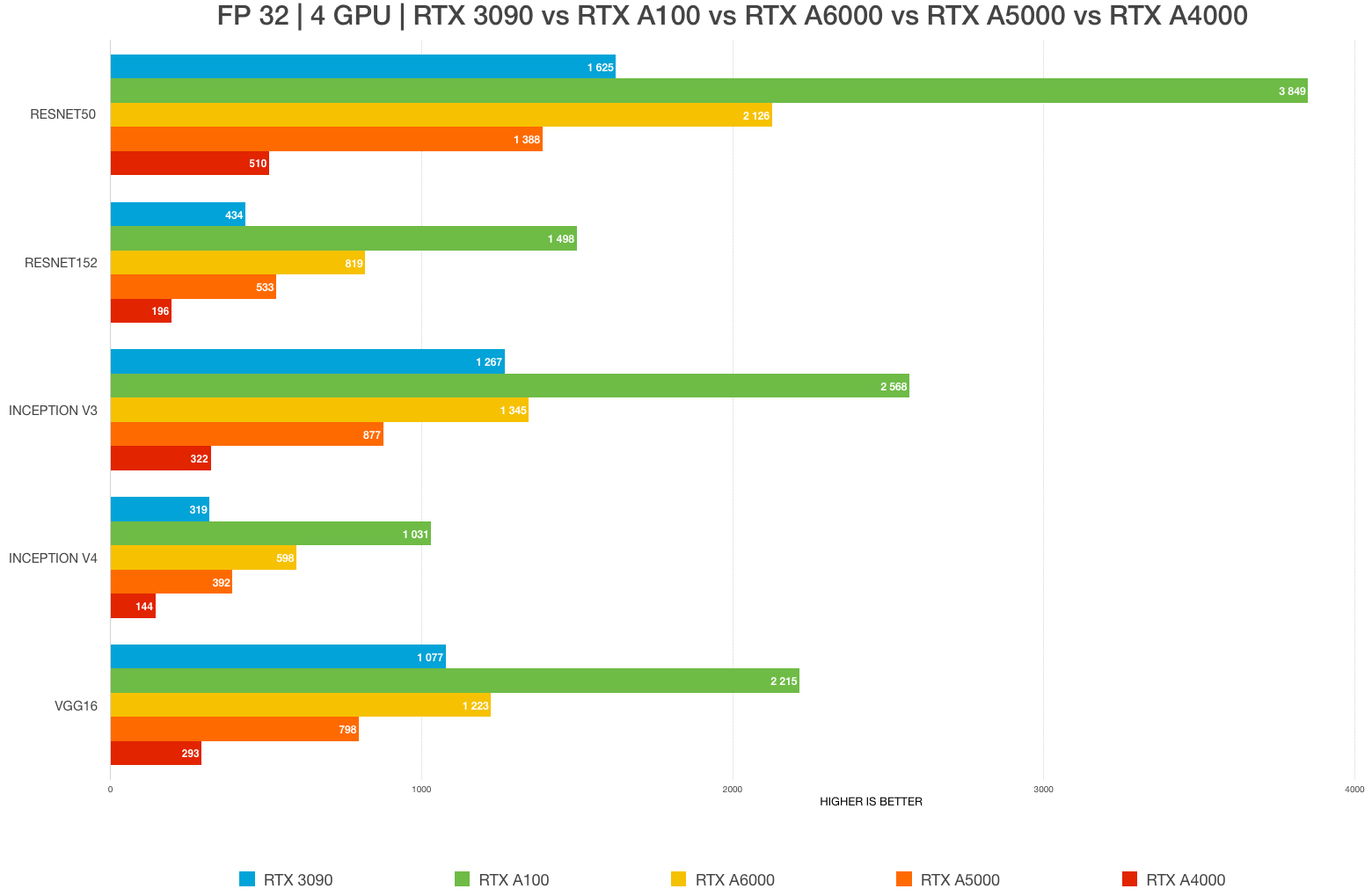

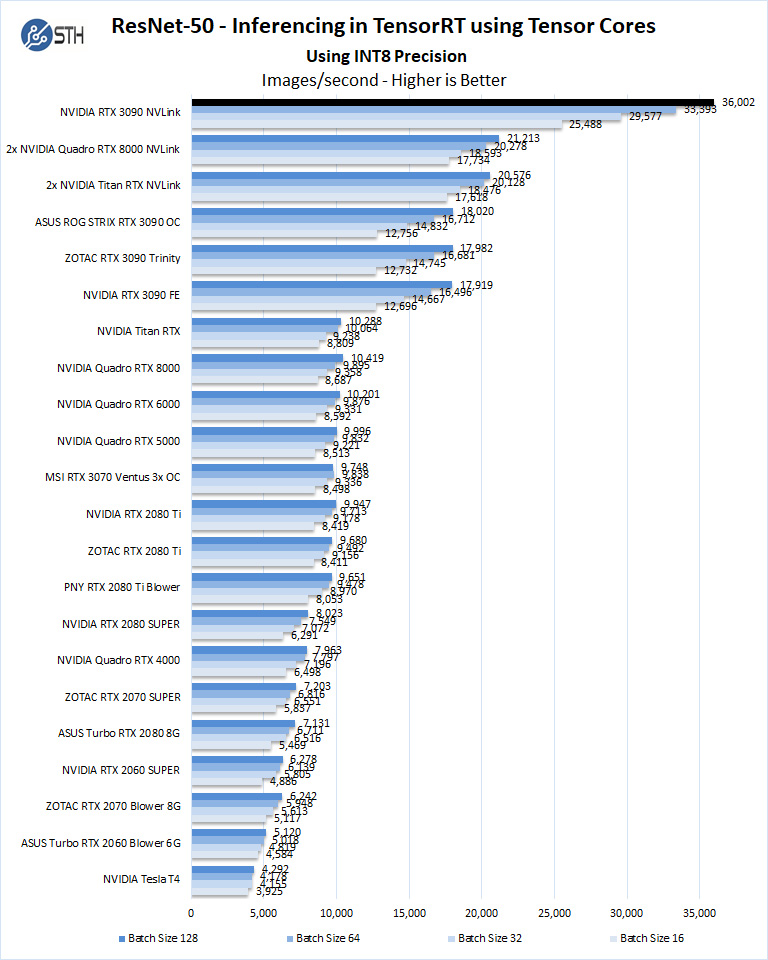

Best GPU for AI/ML, deep learning, data science in 2023: RTX 4090 vs. 3090 vs. RTX 3080 Ti vs A6000 vs A5000 vs A100 benchmarks (FP32, FP16) – Updated – | BIZON

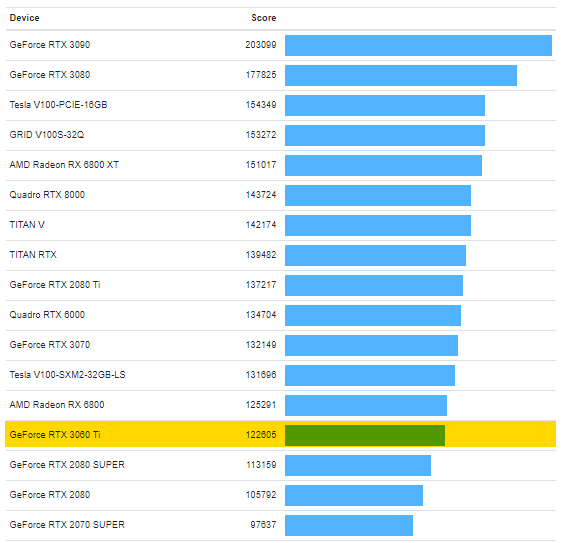

![\[D\] Which GPU(s) to get for Deep Learning (Updated for RTX 3000 Series) : r/nvidia](https://external-preview.redd.it/5u8jDdRCaP-A20wEDShn0DFiQIgr2DG_TGcnakMs6i4.jpg?auto=webp&s=25a683283f0d0a367ff1476983de4b134c72d57f)

![\[D\] RTX 3060 vs Jetson AGX: BERT-Large Benchmark : r/MachineLearning](https://external-preview.redd.it/UmPwf-lVOTMfos8VeIhlSvohfJUCZzz3lLChRvIV6Zo.jpg?auto=webp&s=436d0c3bd2f2fa20ecaf54f3d27b7a0b54faaffc)